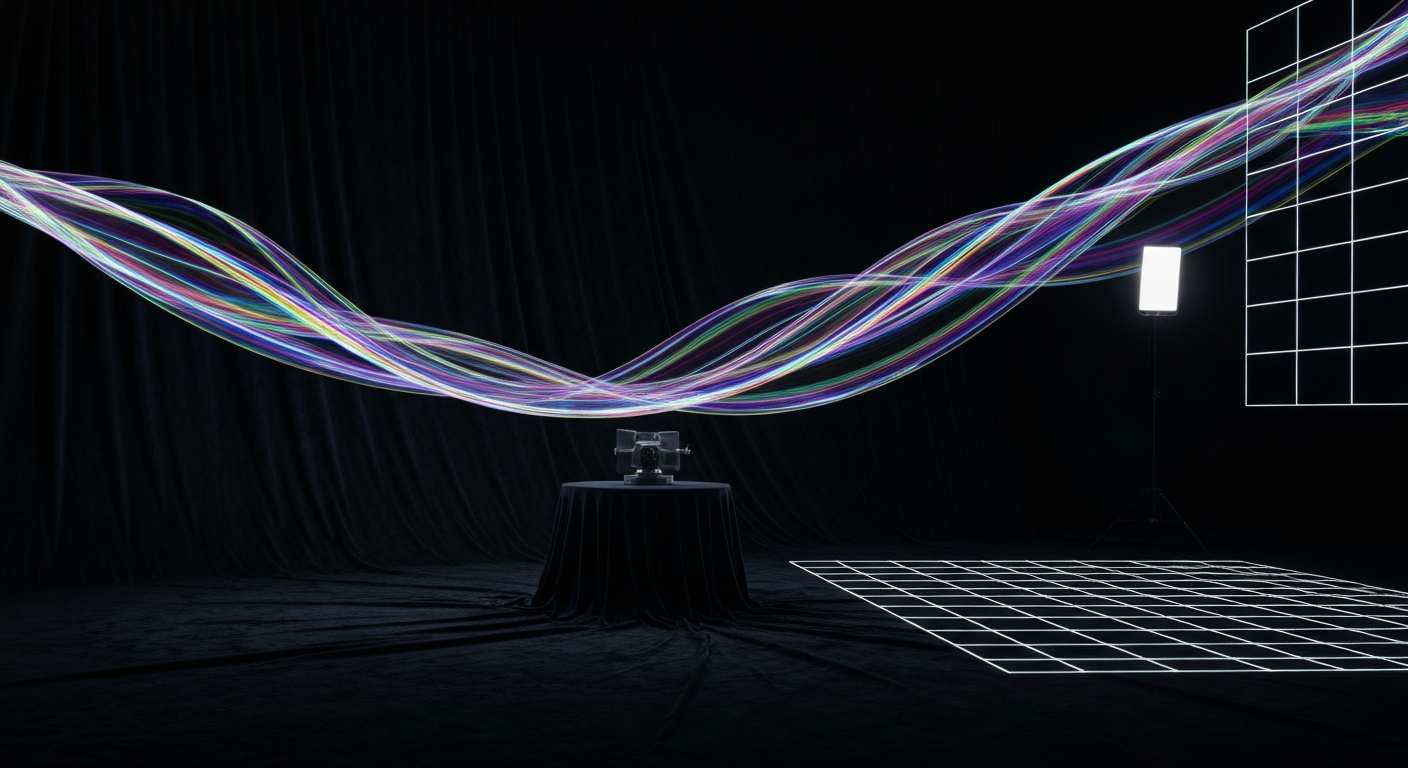

If you've ever watched a projection-mapped building breathe, a dome full of ink-in-water swirl into lightning, or a sculpture glow with a gradient that doesn't quite repeat, you've been watching shaders. Shaders are the quiet engine of every real-time visual effect in the immersive world, and understanding what they are - even at a high level - changes how you think about what the medium can do.

This post isn't a GLSL tutorial. It's a field guide for the non-programmer: what a shader is, why it's so much more powerful than a rendered video, and where the craft is heading.

What a shader actually is

A shader is a tiny program that runs on a GPU. The kind that matters most here, the fragment (or pixel) shader, runs once for every pixel the GPU draws, 60 or more times per second. It takes a handful of inputs (the pixel's position on screen, a time value, maybe a texture, maybe a set of parameters) and returns a color.
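To make that model concrete, here is a minimal CPU emulation. It assumes a fragment shader can be modeled as a plain function from normalized pixel position and time to an RGB color. The names `shade` and `render` are hypothetical, and Python stands in for GLSL. A real GPU makes these per-pixel calls in parallel rather than in a nested loop.

```python
import math
from typing import Callable, List, Tuple

Color = Tuple[float, float, float]  # RGB, each channel in [0, 1]


def shade(x: float, y: float, time: float) -> Color:
    """Hypothetical fragment 'shader': a red/green gradient whose blue channel pulses.

    x and y are normalized pixel coordinates in [0, 1]; time is in seconds.
    """
    pulse = 0.5 + 0.5 * math.sin(time)  # oscillates between 0 and 1
    return (x, y, pulse)


def render(shader: Callable[[float, float, float], Color],
           width: int, height: int, time: float) -> List[List[Color]]:
    """Call the shader once per pixel, as a GPU would, but one pixel at a time."""
    image: List[List[Color]] = []
    for row in range(height):
        line: List[Color] = []
        for col in range(width):
            # Sample at the pixel center, normalized to [0, 1].
            x = (col + 0.5) / width
            y = (row + 0.5) / height
            line.append(shader(x, y, time))
        image.append(line)
    return image


frame = render(shade, width=4, height=3, time=0.0)
print(frame[0][0])  # (0.125, 0.16666666666666666, 0.5)
```

The shader never sees its neighbors or the rest of the image. It only sees its own inputs. That restriction is what lets the GPU run millions of copies at once.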

That sounds modest. It isn't. Because the GPU runs the same program on millions of pixels in parallel, a shader can describe almost any visual pattern procedurally. Waves, clouds, fractals, noise, distortion, light scattering, glass, rain, fire - all of these can be written as a mathematical function of position and time and then drawn by the GPU at 60 Hz.
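As a small illustration of "a mathematical function of position and time," the sketch below describes expanding ripples. It uses nothing but the distance from the frame's center and a sine wave. The function name and its `frequency` and `speed` parameters are hypothetical choices for this example, not taken from any particular tool.

```python
import math


def ripples(x: float, y: float, time: float,
            frequency: float = 40.0, speed: float = 3.0) -> float:
    """Hypothetical procedural pattern: rings expanding from the frame's center.

    Returns a grayscale brightness in [0, 1] for normalized coordinates x, y.
    """
    # Distance from the center of the frame.
    d = math.hypot(x - 0.5, y - 0.5)
    # A sine wave over distance; subtracting speed * time pushes the rings outward.
    return 0.5 + 0.5 * math.sin(frequency * d - speed * time)


# Print a tiny ASCII preview of one frame: brighter pixels get denser characters.
ramp = " .:-=+*#%@"
for row in range(12):
    y = (row + 0.5) / 12
    print("".join(ramp[min(9, int(ripples((col + 0.5) / 24, y, 0.0) * 10))]
                  for col in range(24)))
```

No image data is stored anywhere. Changing `time` and calling the function again produces the next frame.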

The Shadertoy community has been demonstrating this for more than a decade. It's common to find a 200-line shader there that renders a fully animated crystalline landscape with no textures and no 3D models: just math, running live. The same kind of math drives most of the interesting real-time work in immersive installations.

Why shaders beat pre-rendered video for installations

Pre-rendered video has obvious strengths: you can throw a render farm at it, refine every frame, get deterministic results. For a film or a cinema show, that's the right choice. For an installation that runs for months, a shader has three advantages pre-rendered video cannot match.

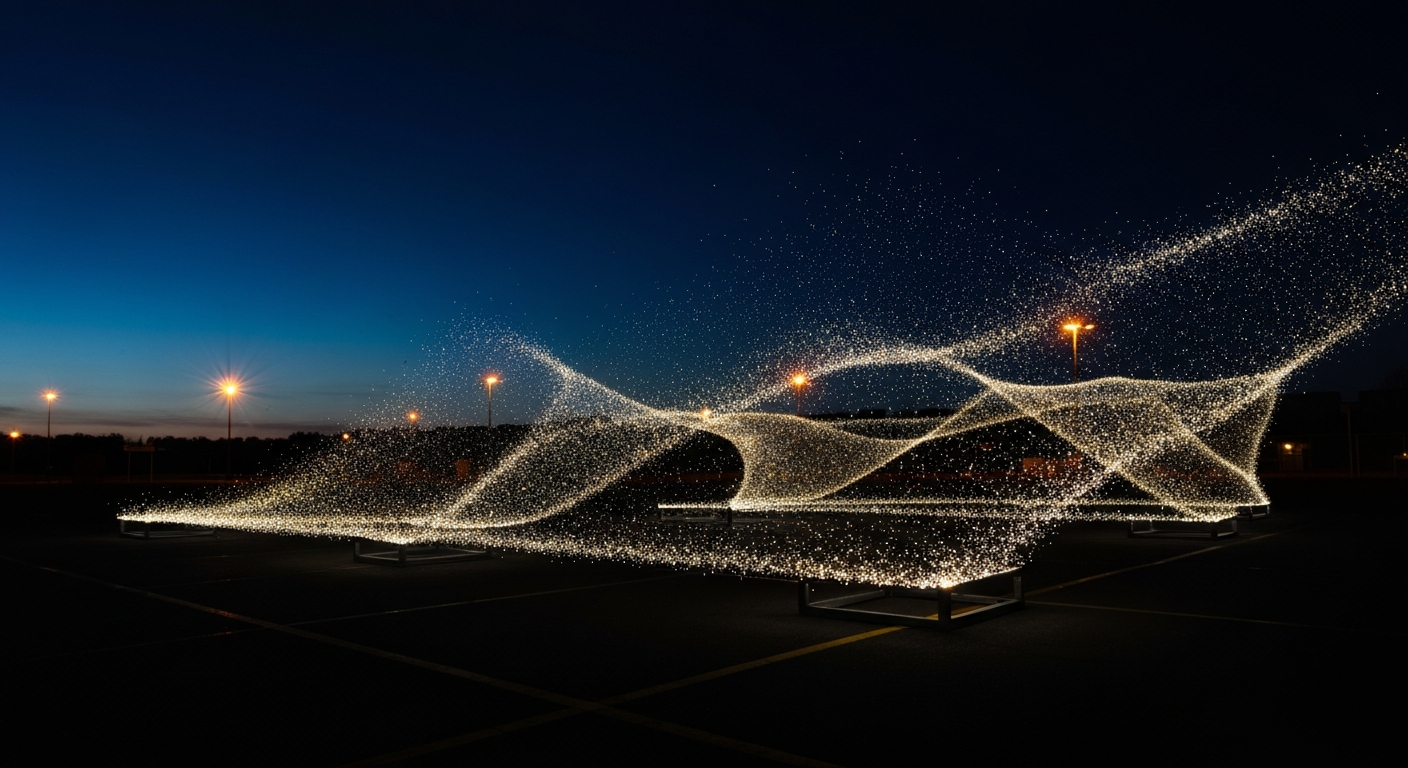

- It never repeats. A shader driven by noise functions and time will, in practice, never play the same frame twice, so visitors who come back don't see the same loop.

- It reacts. A shader can take microphone input, camera feeds, crowd density, weather data, or anything else you can get into the GPU as a uniform (a value that stays the same for every pixel in a given frame), and let that data shape the output.

- It's compact. A 5-minute 4K pre-rendered video runs to gigabytes. The shader that generates an equivalent-looking scene is a few kilobytes of code plus a noise texture.
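All three advantages can be shown in one short sketch. The sketch assumes a simple 2D value noise built from an integer hash. The hash constants are an illustrative choice, not what Notch, TouchDesigner or any other tool uses. Time scrolls the noise field so successive frames differ, and a hypothetical `audio_level` parameter stands in for a uniform fed from a microphone. The whole "scene" is a few dozen lines of code.

```python
import math


def hash2(ix: int, iy: int) -> float:
    """Deterministic pseudo-random value in [0, 1) for an integer lattice point.

    Illustrative 32-bit integer hash; the constants are an assumption for this sketch.
    """
    h = (ix * 374761393 + iy * 668265263) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    h ^= h >> 16
    return h / 2**32


def smoothstep(t: float) -> float:
    """Cubic fade so the noise has no visible creases at cell borders."""
    return t * t * (3.0 - 2.0 * t)


def value_noise(x: float, y: float) -> float:
    """2D value noise: blend the hashed values at the four surrounding lattice corners."""
    ix, iy = math.floor(x), math.floor(y)
    fx, fy = x - ix, y - iy
    a, b = hash2(ix, iy), hash2(ix + 1, iy)
    c, d = hash2(ix, iy + 1), hash2(ix + 1, iy + 1)
    ux, uy = smoothstep(fx), smoothstep(fy)
    top = a + (b - a) * ux
    bottom = c + (d - c) * ux
    return top + (bottom - top) * uy


def ambient(x: float, y: float, time: float, audio_level: float = 0.0) -> float:
    """Hypothetical installation shader returning brightness in [0, 1].

    `time` drifts the noise field so frames keep changing; `audio_level`
    (0 = silent, 1 = loud) plays the role of a uniform and boosts brightness.
    """
    n = value_noise(x * 4.0 + time * 0.3, y * 4.0 + time * 0.1)
    return min(1.0, n * (0.6 + 0.4 * audio_level))


# Same pixel and time give the same value; a later time gives a new one.
print(ambient(0.3, 0.7, time=0.0), ambient(0.3, 0.7, time=60.0))
```

Value noise is one of the simplest noise functions. Production shaders usually use smoother variants such as gradient noise, but the structure is the same: a function of position, time and a few uniforms.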

The tools that wrap shaders for non-programmers

Writing raw GLSL isn't for everyone. Most of the practical work in the immersive space happens inside node-based shader tools that wrap the math in visual graphs. These are the four you'll meet most often:

- Notch is the current industry standard for live-event real-time VFX: a node graph that compiles to an efficient GPU pipeline and drives everything from festival visuals to brand-activation mapping.

- TouchDesigner applies the same idea beyond visuals, with first-class support for audio, sensors, and control surfaces, which makes it the default for interactive installations and long-running generative works.

- Unity's Shader Graph and Unreal's Material Editor do the same job inside their respective engines, for anyone whose pipeline centers on a 3D runtime rather than a live performance tool.

All four of these output the same thing underneath: a shader program the GPU executes. The interface differs; the GPU math doesn't.

What shaders can't do

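Shaders are not magic. They struggle with three kinds of work. The first is anything that requires true randomness: shaders are deterministic, so the same inputs always produce the same color. The second is anything that needs a lot of state carried across frames: each pixel's program runs independently and in parallel, and it forgets everything once it returns a color, so any memory has to be fed back in as the previous frame's image. The third is anything that's fundamentally model-based, such as human faces, photorealistic hair, or specific branded objects, where a pre-rendered or geometry-based approach is still the right tool.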
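The sketch below shows the first two limits. `shader_random` ports a widely copied GLSL one-liner for "random" numbers: it looks noisy, but it is a pure function, so a given pixel always gets the same value. `feedback_step` is a hypothetical trail effect that can only "remember" anything because the previous frame is handed back in, the way feedback buffers work in real tools. Python again stands in for GLSL, and both names are illustrative.

```python
import math
from typing import List

Frame = List[List[float]]  # grayscale image, values in [0, 1]


def shader_random(x: float, y: float) -> float:
    """Python port of the GLSL idiom fract(sin(dot(p, vec2(12.9898, 78.233))) * 43758.5453).

    Looks random, but is a pure function of its inputs.
    """
    v = math.sin(x * 12.9898 + y * 78.233) * 43758.5453
    return v - math.floor(v)  # equivalent of GLSL fract()


def feedback_step(previous: Frame, decay: float = 0.9) -> Frame:
    """One frame of a hypothetical trail effect whose only memory is the previous frame.

    Each output pixel reads its left-hand neighbor from `previous` (as if sampling a
    texture) and dims it; column 0 is fed by a constant light source off-screen.
    """
    height, width = len(previous), len(previous[0])
    out: Frame = []
    for row in range(height):
        line = []
        for col in range(width):
            left = previous[row][col - 1] if col > 0 else 1.0
            line.append(decay * left)
        out.append(line)
    return out


# Determinism: the "random" value never changes for the same pixel.
assert shader_random(0.25, 0.75) == shader_random(0.25, 0.75)

# State lives only in the frame we pass back in on each step.
frame: Frame = [[0.0] * 4]
for _ in range(3):
    frame = feedback_step(frame)
print(frame)  # the trail now covers three pixels, dimming as it goes
```

To get fresh "randomness" each frame, shader authors typically mix time into the inputs. That changes the output, but the result is still deterministic.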

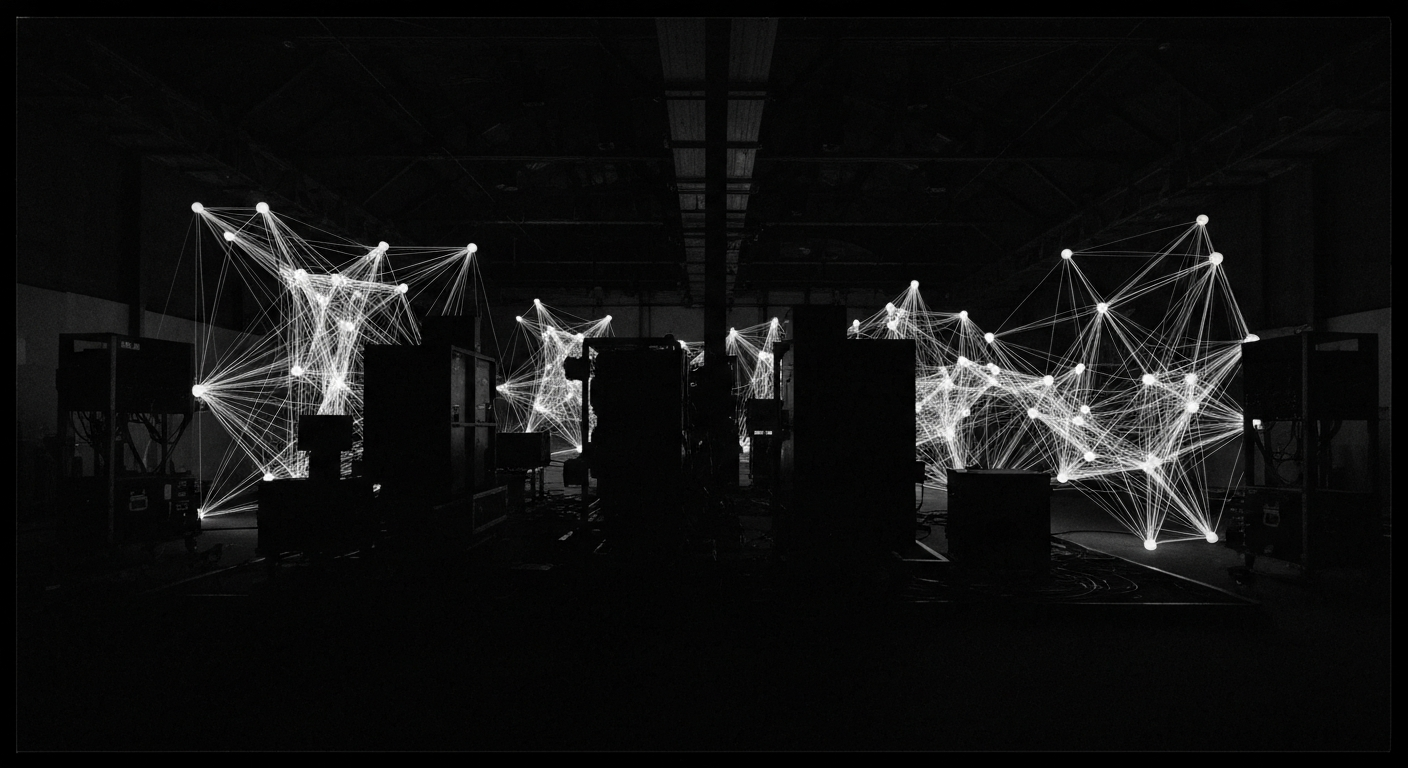

The most effective immersive content in 2026 is almost always hybrid: pre-rendered hero moments, shader-driven ambient material, 3D model elements for anything that needs identity. Purists on either side tend to deliver work that's either dead on the loop or missing the brand thread. Craft is knowing when to reach for which tool.

A shader is the cheapest way to put infinite variation on a surface. It's also the cheapest way to make everything look like a shader. Use the tool; don't be the tool.

If you want to go deeper

Inigo Quilez's articles are the single best on-ramp to shader thinking, with years of worked examples from signed-distance-field geometry to noise and domain warping. The Book of Shaders by Patricio Gonzalez Vivo and Jen Lowe is the most approachable introduction if you want to write one. The Notch Learn series is the fastest way to get from zero to a working real-time visual for a live show.