The AI wave hit visual production hard in 2023-24, and by 2026 the dust has settled enough to separate the tools that changed how studios work from the ones that were good demos and bad products. This is an honest accounting - what's in our content pipeline, what got tried and discarded, and what's still overpromised relative to what it delivers.

The clear wins

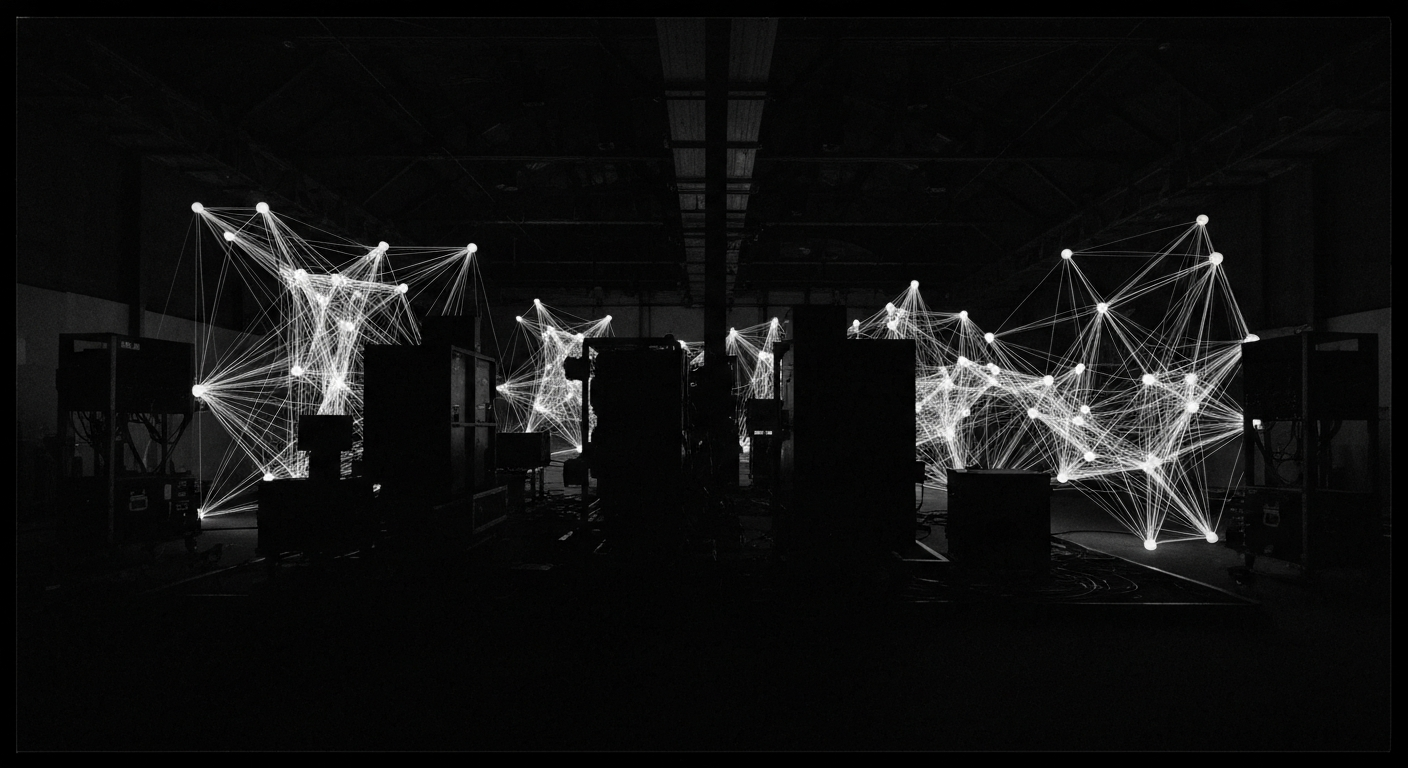

Image generation for concept and moodboard work is the obvious hit. Midjourney, Firefly, and the current crop of fine-tunable open models have collapsed what used to be a week of art-direction iteration into hours. The generated images are almost never final deliverables, but they're the fastest way to align a team on the visual direction the content has to hit. This is now a standard step in most studio workflows.

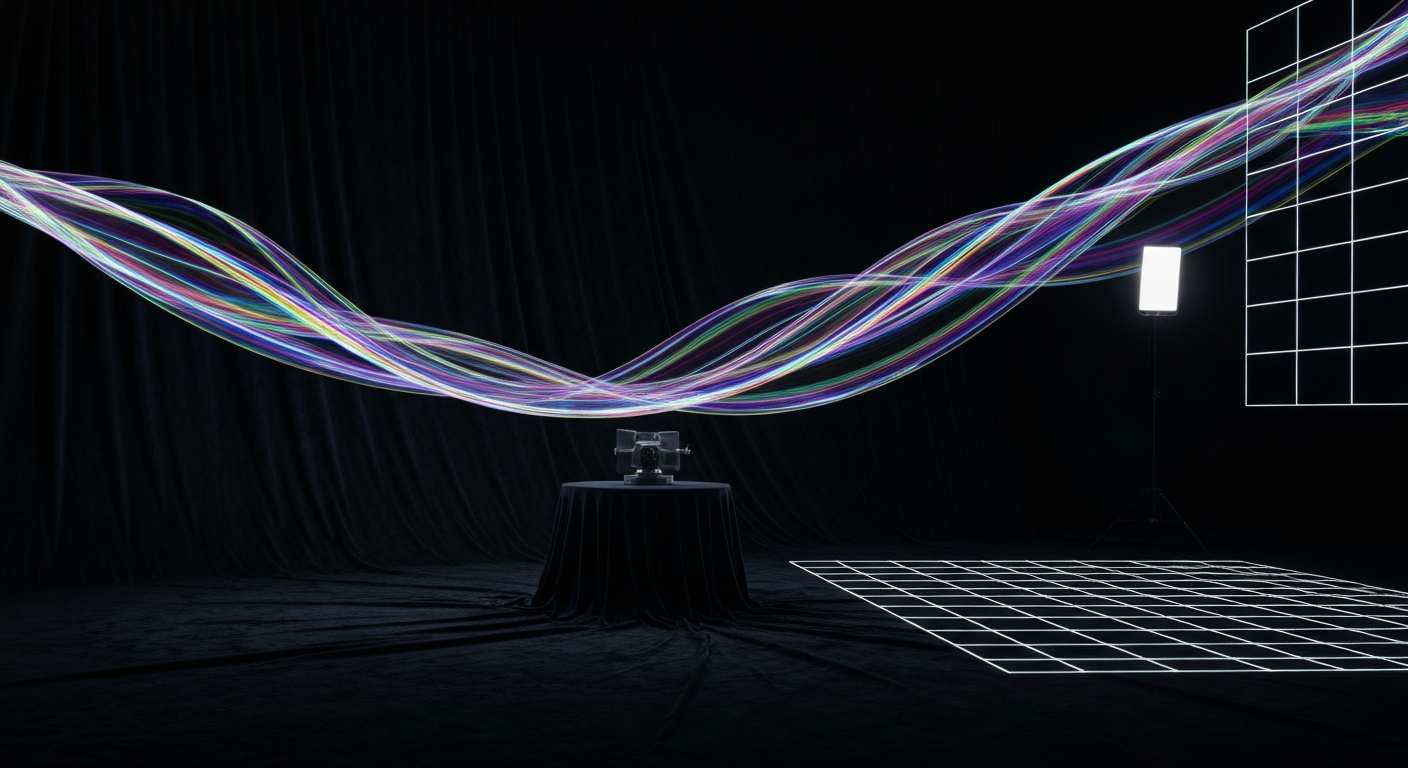

Upscaling and frame interpolation are the second clear win. Topaz Gigapixel, RTX Video Super Resolution, Flowframes - all of these turn lower-resolution or lower-framerate source material into usable masters for large-format delivery. A tool that takes 1080p source and produces clean 4K output is doing real work that used to cost real money.

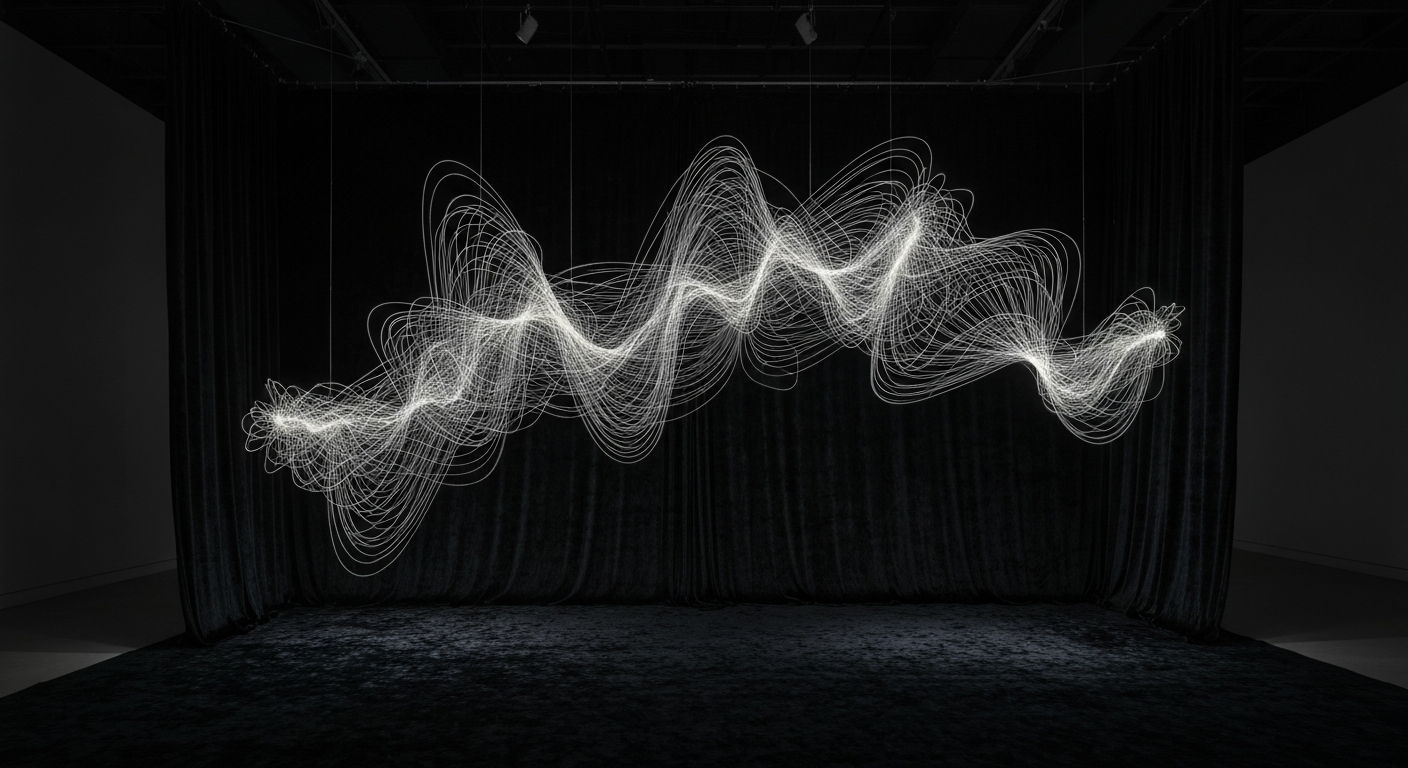

Style transfer and regen tools (Runway, Kaiber, the various Stable Diffusion video extensions) are useful in specific niches - consistent stylized treatment across long sequences, rotoscope-style work, motion-graphics backgrounds. Not a general-purpose content pipeline, but a legitimate specialist tool.

The mixed bag

Text-to-video tools (Sora, Runway Gen-3, Pika) generate impressive demo reels and land badly in production. The output is unreliable, the characters drift, the camera moves are uncontrollable, and the resolution is well below what large-format installations need. These tools have a role as concept generators and B-roll sources, but they haven't replaced any step in real production.

AI-generated audio - voice, music, effects - is further along than AI-generated video, and shows up in production more often. ElevenLabs for voiceover, Suno and Udio for stock music, various commercial libraries for effects. Quality is good enough for most event applications; broadcast quality is another step up, but it's coming.

The overpromised

Anything advertised as "end-to-end AI production" in 2026 is still a pitch deck. AI tools are excellent components in specific pipeline stages and mediocre replacements for human craft at the pipeline level. The studios getting real value from AI are using it as a power tool inside otherwise-traditional workflows, not as a wholesale replacement for the workflow.

Automated editing, auto-storyboarding, and "AI art director" products have mostly not delivered. They produce output that looks like competent pastiche and lacks whatever creative direction made the reference material good to begin with. For installation work where the visual direction is the product, these tools are still more distraction than lever.

Where to actually invest attention

- Text-to-image for concept iteration. A month of team practice pays for itself in faster direction alignment.

- Upscaling pipelines for large-format delivery. Any studio doing above-HD work should have this dialed in.

- AI voice for previz and rough narration. Final narration still benefits from a real performer.

- Image in-painting and retouching for content cleanup. Faster than manual Photoshop retouching for most correction tasks.

- Real-time style transfer on live camera feeds for interactive pieces. Novel use of the tech that's hard to do any other way.

What isn't worth investing in yet: fully automated content pipelines, text-to-video as a primary production tool, and AI "show design" products. These are a year or two away, and chasing them now is expensive.

The studios that absorbed AI into their craft pulled ahead. The studios that tried to let AI replace their craft fell behind. The difference is whether you hired it as a tool or mistook it for a replacement.

The bigger shift

The real effect of AI on content production in 2026 isn't any single tool. It's that the bottom of the content market has gotten much cheaper - "good enough" content for low-budget uses is now genuinely cheap to produce. This means premium content work has to be genuinely premium, distinguished by craft, direction, and integration that AI cannot replicate. The bar for "professional" has risen, and the work worth paying for is the work that clearly couldn't have been generated.