Generative art and machine learning grew up in parallel and have only recently started converging. Generative art has traditionally meant hand-written shaders, L-systems, particle simulations, agent-based flocks - explicit, rule-based math running live. Machine-learning models are stochastic, data-driven, and until recently too slow to run at performance frame rates. The speed constraint is falling, and the result is a new generation of real-time generative work that couldn't exist five years ago.

Models small enough to run live

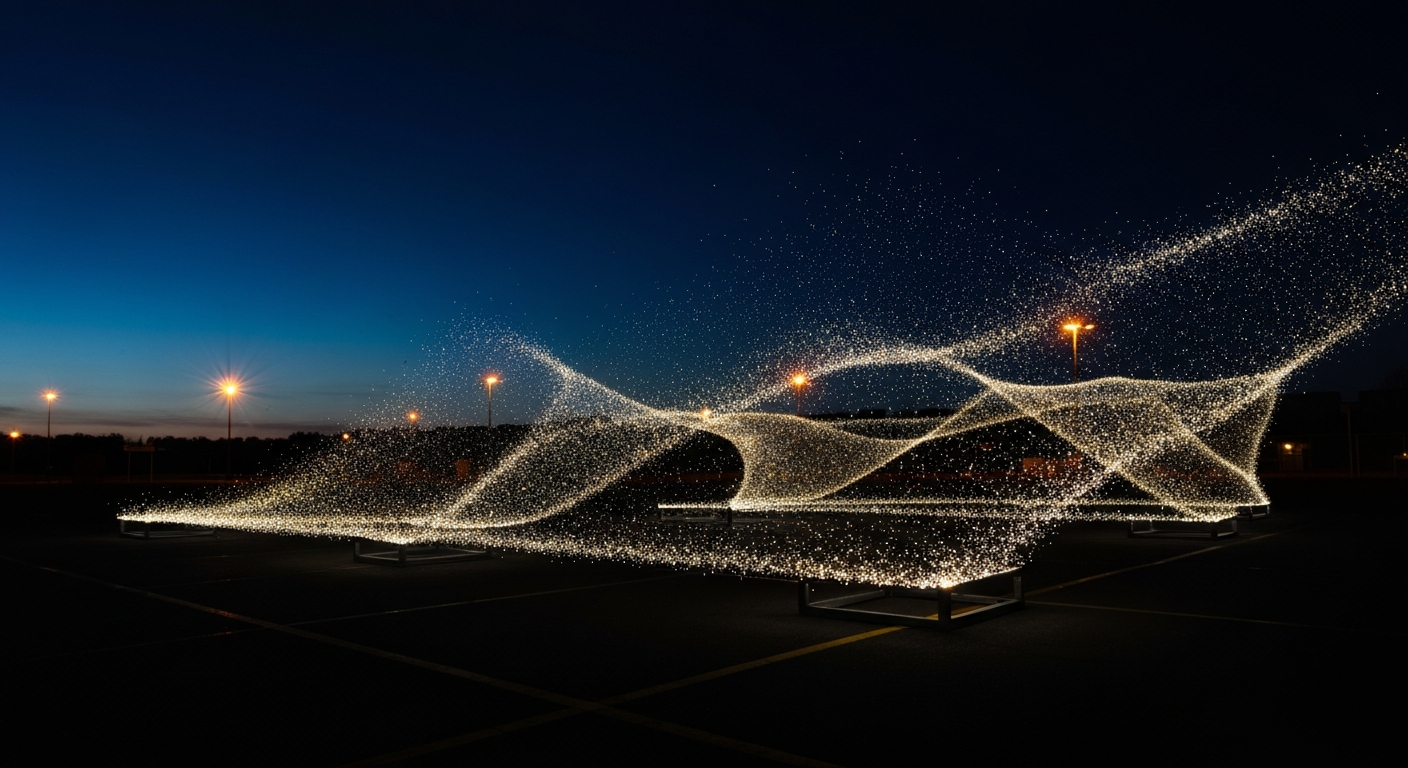

The practical constraint on ML in real-time generative work has always been inference speed. A model that takes 300 milliseconds per frame tops out at roughly 3 frames per second, which is useless for a 60-fps installation with a budget of about 16.7 milliseconds per frame. The 2023-25 wave of small, efficient generative models - SDXL Turbo, LCM-based distilled models, on-device Stable Diffusion, real-time style transfer - changed that. It's now routine to run image generation at 15-30 frames per second on consumer GPU hardware, which is not 60 fps but is fast enough to read as live.

The output isn't film-quality, but for an installation where the image is being projected on a wall or displayed on a large LED, the fidelity gap matters less than it would on a cinema screen. The tradeoff is almost always acceptable for the capability you gain: content that responds to input in ways hand-written shaders can't match.
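To put the frame-rate numbers above in perspective, here is a minimal sketch of the arithmetic. The function names are made up for illustration, and it assumes inference latency is the only cost per frame, which a real pipeline (capture, upload, compositing) will not satisfy exactly.

```python
import math


def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame at the given frame rate."""
    return 1000.0 / fps


def max_fps(latency_ms: float) -> float:
    """Best-case frame rate if every frame costs latency_ms of inference."""
    return 1000.0 / latency_ms


def refreshes_per_generated_frame(display_fps: float, latency_ms: float) -> int:
    """How many display refreshes each generated image has to be held for
    when the display runs faster than the model can generate."""
    return math.ceil(latency_ms * display_fps / 1000.0)


if __name__ == "__main__":
    print(f"60 fps budget: {frame_budget_ms(60):.1f} ms")        # about 16.7 ms
    print(f"300 ms model: {max_fps(300):.1f} fps")               # about 3.3 fps
    print("300 ms model on a 60 Hz display holds each image for",
          refreshes_per_generated_frame(60, 300), "refreshes")   # 18
    print("50 ms model on a 60 Hz display holds each image for",
          refreshes_per_generated_frame(60, 50), "refreshes")    # 3
```

A model in the 15-30 fps range means each generated image stays on a 60 Hz display for two to four refreshes, which is usually where interpolation or a shader layer on top does the work of keeping motion smooth.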

What ML unlocks that shaders can't

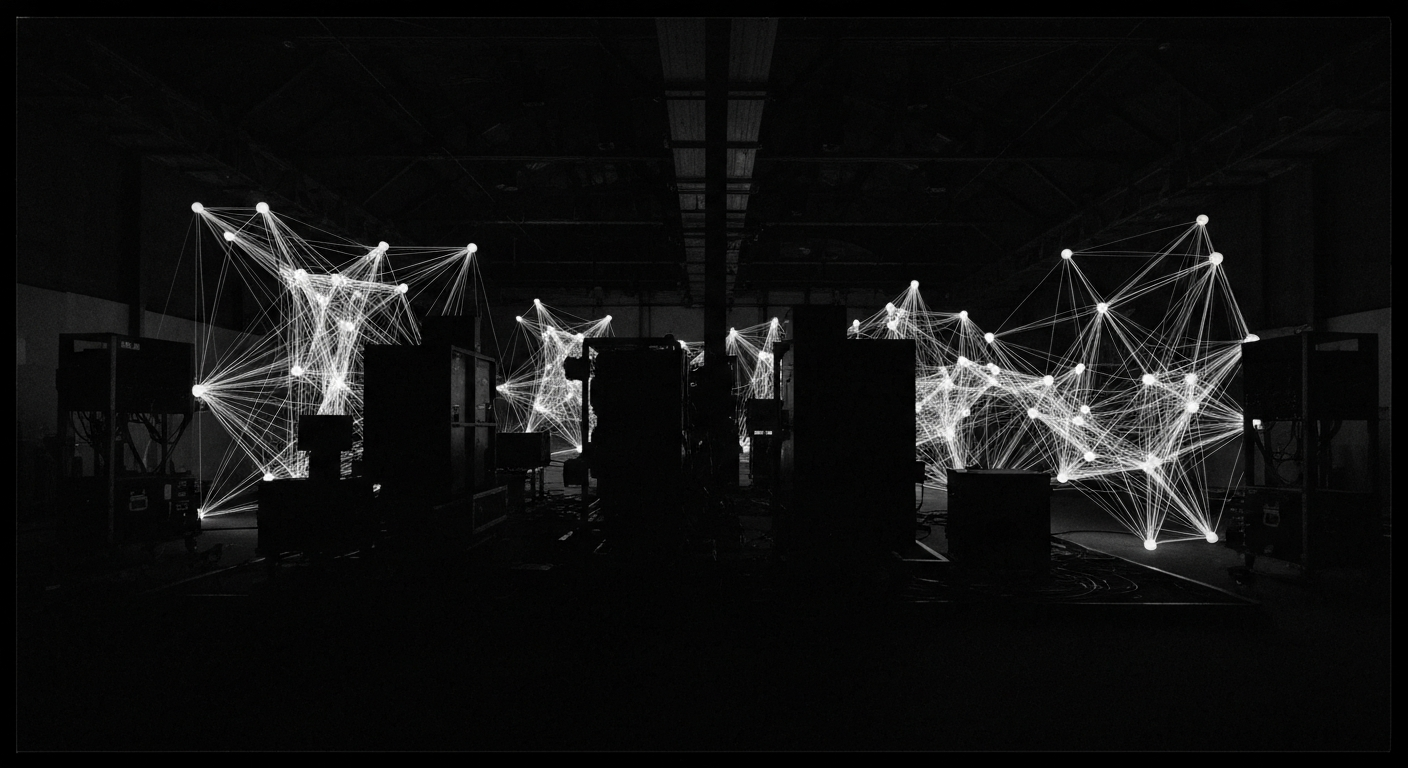

Shaders are good at abstract, procedural, mathematical imagery. They're bad at semantically specific imagery - a shader cannot easily generate "a photograph of a lion" or "a watercolor of a face." ML models are the opposite: they're good at semantically rich imagery and bad at the long-tail mathematical control shaders excel at.

The implication for generative work is that ML enables a category of installation that was previously impossible: imagery that responds semantically to input. A room that generates imagery matching what visitors are saying. A wall that paints scenes based on the weather outside. An installation that reacts to the actual content of a live video feed, not just its color histogram.
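As a rough illustration of what "responds semantically" can mean in practice, the weather wall could start as nothing more than a mapping from sensor readings to a text prompt that is then handed to whatever image model the installation uses. Everything here is hypothetical: the `Weather` fields, the thresholds, and the prompt wording are placeholders, and the model call itself is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Weather:
    """Hypothetical reading from a weather sensor or API."""
    temperature_c: float
    cloud_cover: float  # 0.0 (clear) to 1.0 (fully overcast)
    raining: bool


def weather_to_prompt(w: Weather) -> str:
    """Turn a weather reading into a text prompt for an image model.

    The thresholds and phrases are arbitrary illustrative choices.
    """
    if w.raining:
        scene = "rain-streaked street under heavy clouds"
    elif w.cloud_cover > 0.6:
        scene = "overcast sky over low hills"
    else:
        scene = "clear sky over open fields"

    if w.temperature_c < 5:
        mood = "cold blue palette"
    elif w.temperature_c > 25:
        mood = "warm golden palette"
    else:
        mood = "soft neutral palette"

    return f"a watercolor landscape, {scene}, {mood}"


if __name__ == "__main__":
    print(weather_to_prompt(Weather(temperature_c=2.0, cloud_cover=0.9, raining=True)))
```

The point of the sketch is the shape of the pipeline, not the mapping: the input is interpreted for meaning first, and the image follows from that interpretation rather than from raw signal values.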

The current production toolkit

Stable Diffusion with LoRA fine-tuning is the workhorse for most real-time ML generative work in 2026. It runs fast enough, it's open enough to customize, and the community around it has built enough tooling that a production team can stand up a custom model for a specific installation in days rather than months.

Supporting tools: ComfyUI for pipeline composition, AUTOMATIC1111 for prototyping, TouchDesigner's ML operators for integration into existing node-graph workflows, Runway and Modal for hosted inference when on-device isn't practical. The stack is still consolidating, but it's usable.

Failure modes

- Hallucination at the edges. ML models generate confident nonsense outside their training distribution. Input sanitization matters.

- Temporal incoherence. Frames don't always connect smoothly, which shows up as flicker. Techniques like ControlNet conditioning and temporal consistency LoRAs help.

- Hardware variability. An installation that runs on a specific GPU may not run on a slightly different one without quality regressions.

- Content liability. ML models can generate things the installation doesn't want to show. Guardrails and content filters need to be a first-class part of the design.
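Two of the failure modes above have cheap first lines of defense that can be sketched without any ML library. The code below is an assumption-laden illustration, not a production guardrail: the blocklist, the length cap, and the fallback prompt are placeholders, and the frame blend is a plain exponential moving average in pixel space, a much cruder technique than the ControlNet conditioning or temporal LoRAs mentioned in the list.

```python
import re

# Placeholder values; a real installation would maintain its own list and limits.
BLOCKED_TERMS = {"gore", "nude"}
MAX_PROMPT_CHARS = 200
FALLBACK_PROMPT = "abstract colored shapes"


def sanitize_prompt(raw: str) -> str:
    """Clean untrusted text before it reaches the model.

    Keeps ASCII letters, digits, spaces and basic punctuation (so non-ASCII
    input is dropped), collapses whitespace, caps the length, and falls back
    to a safe prompt if the result is empty or contains a blocked term.
    """
    cleaned = re.sub(r"[^A-Za-z0-9 ,.'-]", " ", raw)
    cleaned = re.sub(r"\s+", " ", cleaned).strip()[:MAX_PROMPT_CHARS]
    words = set(re.findall(r"[a-z0-9']+", cleaned.lower()))
    if not cleaned or words & BLOCKED_TERMS:
        return FALLBACK_PROMPT
    return cleaned


def blend_frames(previous: list[float], current: list[float], alpha: float = 0.3) -> list[float]:
    """Exponential moving average of two frames given as flat pixel lists.

    Lower alpha means more smoothing (less flicker) but more ghosting.
    """
    return [(1.0 - alpha) * p + alpha * c for p, c in zip(previous, current)]


if __name__ == "__main__":
    print(sanitize_prompt("  a lion <b>  in   snow "))
    print(sanitize_prompt("gore, everywhere"))
    print(blend_frames([0.0, 1.0], [1.0, 1.0], alpha=0.5))
```

A word blocklist only catches the obvious cases. It filters what goes in, not what comes out, so an output-side content check is still needed for anything shown to the public.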

The craft question

There's a legitimate question about what it means to call something "generative art" when the generation is a trained model rather than a hand-written algorithm. The old generative art world - Vera Molnár, Manfred Mohr, early Casey Reas - was about finding beauty in explicit rules. The ML generative world is about sampling a trained distribution. They're different practices, and both are valid, but they aren't the same thing.

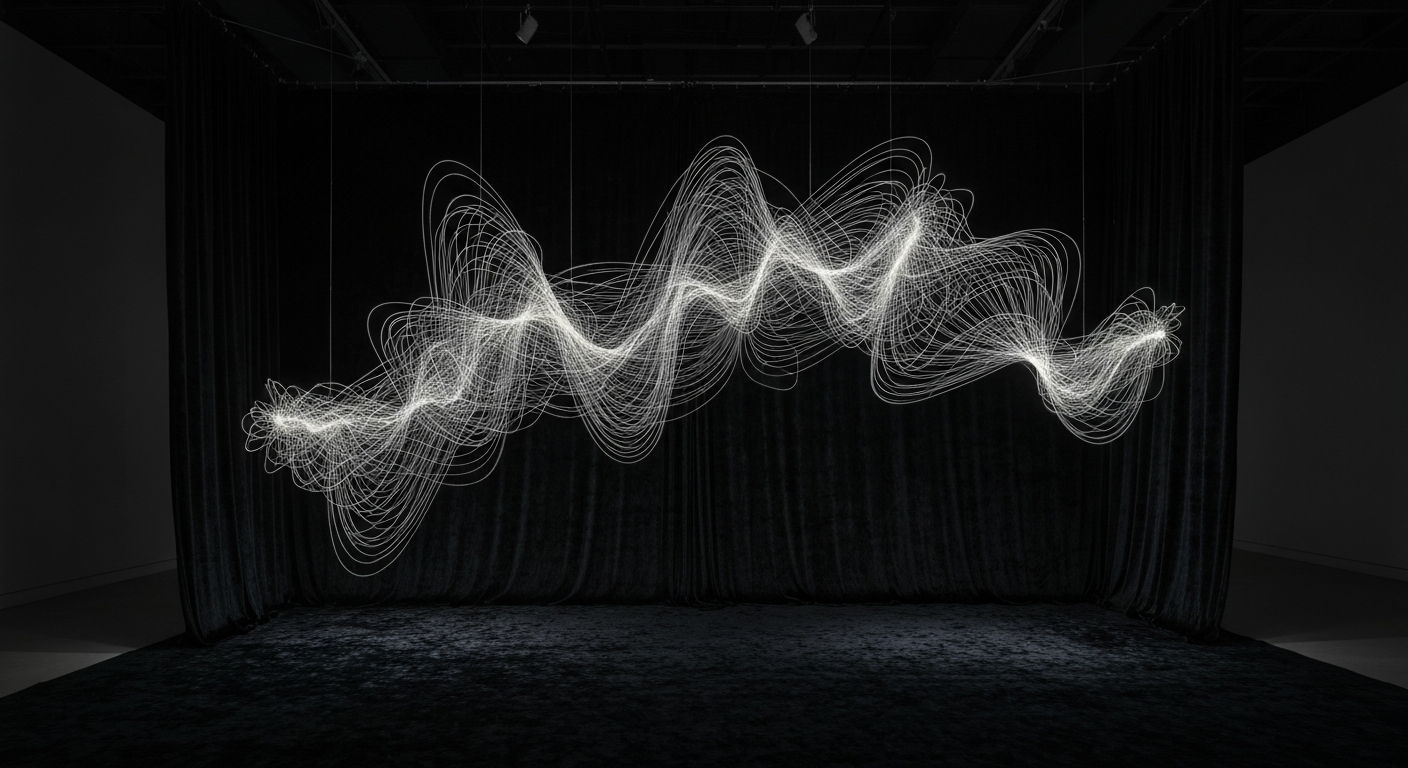

Artists who want to call their work generative in the tradition of the form should be thoughtful about the distinction. Artists who want to embrace ML for what it is have the better tool for certain effects. The most interesting work is increasingly hybrid - rule-based structure with ML-generated imagery inside it, or the reverse.

Shaders are math finding beauty. ML is data finding meaning. Both have a place in the medium; conflating them is the fastest way to produce bad work with either tool.