A room that responds to the people in it feels magical in a way a touchscreen cannot. The craft of making that happen isn't magic, though - it's a specific toolkit of sensors, software, and interaction design. Here's a practical tour of the sensor tech that's become viable for interactive installations in the last few years.

LiDAR is surprisingly good now

LiDAR - laser-based depth sensing - has dropped in price and improved in resolution enough that a 2D "sheet" LiDAR scanning a floor plane is a practical way to track people walking through a space. A SICK, Leuze, or Hokuyo sensor mounted above a room can output a real-time map of where everyone is, how fast they're moving, and where they're clustering. The data feeds cleanly into TouchDesigner or Unity, and the installation can respond to crowd density, proximity to a feature, or approach angle without anyone touching anything.

The constraints: LiDAR is anonymous (you know someone is at position X but you don't know who), it needs calibration to the room geometry, and it struggles with very dense crowds where individual tracking breaks down. For installations that want to react to the feel of the room rather than identify specific visitors, it's ideal.
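To make the "map of where everyone is" concrete, here is a minimal sketch of turning one 2D scan into anonymous positions. It assumes the sensor driver hands you a list of ranges in meters at evenly spaced angles, and that you recorded a scan of the empty room during calibration. The function names, margins, and thresholds are all hypothetical, and a production tracker would also handle scan wrap-around and frame-to-frame identity.

```python
import math

def scan_to_points(ranges_m, angle_start_rad, angle_step_rad,
                   background_m, margin_m=0.15):
    """Convert one polar scan to (x, y) points, keeping only beams that
    return noticeably shorter than the empty-room background scan."""
    points = []
    for i, (r, bg) in enumerate(zip(ranges_m, background_m)):
        if r <= 0.0 or r >= bg - margin_m:
            continue  # invalid return, or the beam hit the static room
        theta = angle_start_rad + i * angle_step_rad
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points

def cluster_points(points, max_gap_m=0.3, min_points=3):
    """Group consecutive scan points that sit closer than max_gap_m and
    return one centroid per group. Small groups are treated as noise."""
    clusters, current = [], []
    for p in points:
        if current and math.dist(p, current[-1]) > max_gap_m:
            clusters.append(current)
            current = []
        current.append(p)
    if current:
        clusters.append(current)
    return [
        (sum(x for x, _ in c) / len(c), sum(y for _, y in c) / len(c))
        for c in clusters
        if len(c) >= min_points
    ]
```

Each centroid is a position with no identity attached. The dense-crowd failure is visible in the logic too: two people standing shoulder to shoulder produce one unbroken run of points and collapse into a single cluster.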

Cameras are cheaper and smarter than they were

A single industrial USB camera plus a modest GPU is enough for most 2D computer-vision needs in 2026. Body-pose tracking (BlazePose via MediaPipe, or MoveNet), hand tracking, and face tracking all run in real time on consumer hardware. For installations that want to track a specific visitor's arm movement, mimic a gesture, or recognize a pose, camera-based CV is the fastest way there.

Multi-camera arrays add robustness - if one camera loses a subject to an occlusion, another camera can fill in. For installations spanning large areas, a grid of four to eight cameras outperforms a single higher-resolution one.
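One way to get that fill-in behavior is to project every camera's detections into shared floor coordinates and merge the ones that land close together. The sketch below assumes that calibration has already happened and that each detection arrives as a hypothetical `(camera_id, x, y, confidence)` tuple. The merge radius is a guess to tune on site, not a recommended value.

```python
import math

def fuse_detections(detections, merge_radius_m=0.5):
    """Merge per-camera detections in shared floor coordinates.

    detections: iterable of (camera_id, x_m, y_m, confidence).
    Returns one dict per estimated person, holding a confidence-weighted
    position and the set of cameras that currently see them.
    """
    merged = []
    # Visit the most confident detections first so they seed the merge.
    for cam, x, y, conf in sorted(detections, key=lambda d: d[3], reverse=True):
        for person in merged:
            close = math.hypot(x - person["x"], y - person["y"]) <= merge_radius_m
            # One camera should not contribute twice to the same person.
            if close and cam not in person["cameras"]:
                total = person["weight"] + conf
                person["x"] = (person["x"] * person["weight"] + x * conf) / total
                person["y"] = (person["y"] * person["weight"] + y * conf) / total
                person["weight"] = total
                person["cameras"].add(cam)
                break
        else:
            merged.append({"x": x, "y": y, "weight": conf, "cameras": {cam}})
    return merged
```

A visitor who is occluded in one view still shows up in the output, just with a single camera in their `cameras` set.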

Depth cameras bridge the gap

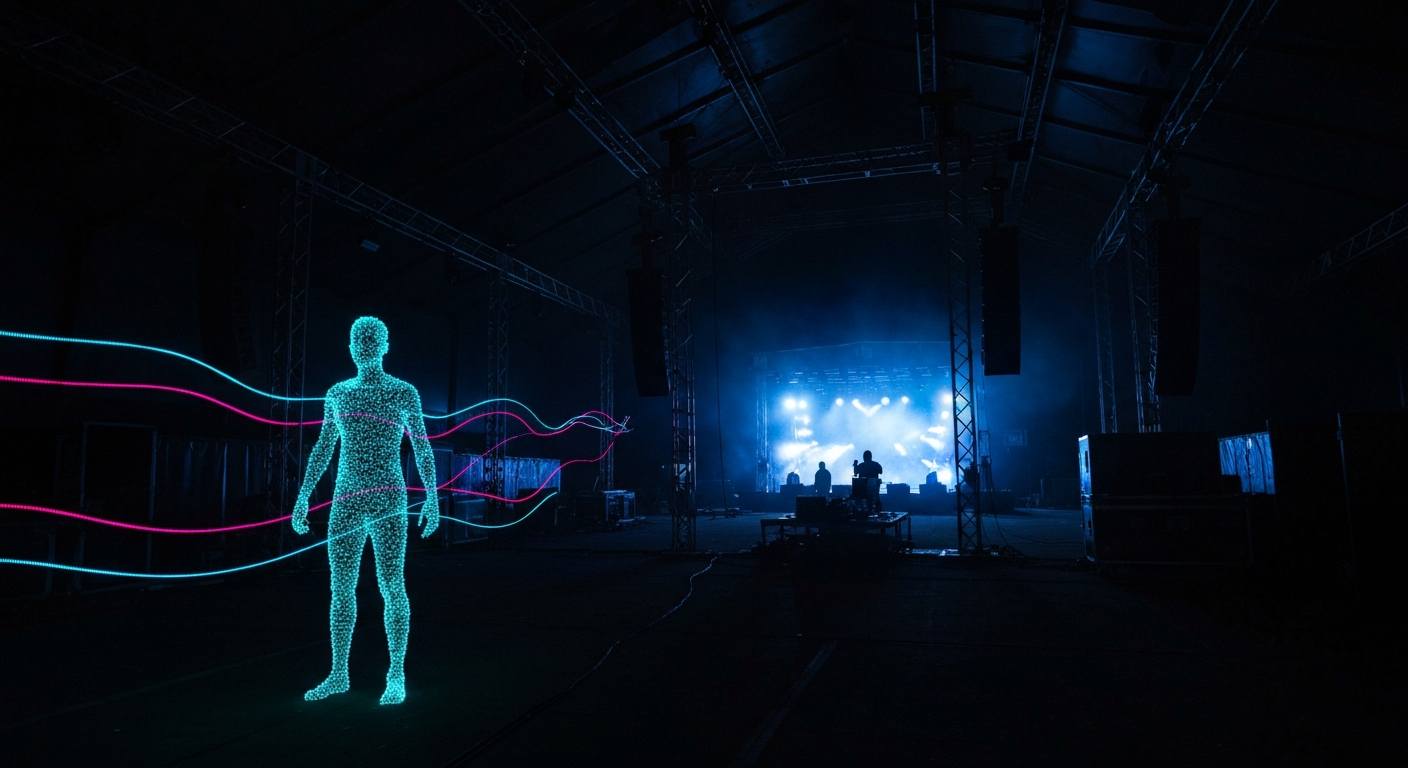

Intel RealSense (recently deprecated but still widely available), Azure Kinect (also deprecated but in use), and Stereolabs ZED cameras give you a depth map alongside the RGB image. The depth map is what makes background segmentation, gesture recognition, and 3D skeleton tracking reliable in messy real-world lighting. When an installation needs to track a person in a changing lighting environment - a lobby, a festival tent, a room with audience milling about - depth cameras are the right sensor.
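The reason depth survives messy lighting is that segmentation becomes a distance comparison rather than a color comparison. A minimal sketch, assuming depth frames arrive as rows of millimeter values with 0 meaning "no reading" (a common convention, but check your camera's SDK) and that a frame of the empty room was captured beforehand. The function name and threshold are hypothetical.

```python
def foreground_mask(depth_mm, background_mm, min_delta_mm=150):
    """Return a mask that is True where a pixel is at least min_delta_mm
    closer to the camera than the empty-room background.

    Pixels with no valid reading (0) in either frame are left as background.
    """
    return [
        [d > 0 and b > 0 and (b - d) >= min_delta_mm for d, b in zip(d_row, b_row)]
        for d_row, b_row in zip(depth_mm, background_mm)
    ]
```

In practice you would run this on arrays (NumPy or the GPU) rather than nested lists, but the comparison is the same. Nothing in it looks at brightness or color, so a lighting cue that changes the whole room does not change the mask.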

The software side

TouchDesigner is the most common choice for hooking sensors into an installation. It has native support for video input, OSC, serial, NDI, and most common CV libraries, plus the ability to drop Python in wherever the node graph needs to call something specific. For installations where the CV is heavy - running a pose model at 60 fps, processing multi-camera streams - a separate CV machine running Python or a compiled binary talking to TouchDesigner over OSC is a common pattern.
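The CV-machine-to-TouchDesigner link is simpler than it sounds, because an OSC message is just a small binary packet sent over UDP. Here is a sketch that builds one by hand with the standard library, float arguments only. The `/person/0` address and the helper names are made up for illustration, and in a real project a maintained OSC library is probably the better choice.

```python
import socket
import struct

def _osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)

def osc_message(address, *floats):
    """Build an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(floats)
    payload = struct.pack(f">{len(floats)}f", *floats)  # big-endian floats
    return _osc_string(address) + _osc_string(type_tags) + payload

def send_osc(sock, host, port, address, *floats):
    """Send one OSC message as a single UDP datagram."""
    sock.sendto(osc_message(address, *floats), (host, port))
```

Usage is `sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)` followed by `send_osc(sock, host, port, "/person/0", x, y)`, with the host and port matching whatever the OSC In operator on the TouchDesigner side is listening on.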

Unity and Unreal can host the sensor pipeline themselves, but usually the production rhythm is smoother if the sensor layer lives in a separate process that speaks a simple protocol. Keeping the visual runtime ignorant of sensor specifics makes the installation easier to maintain when a sensor gets replaced or upgraded.
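One way to keep the visual runtime ignorant is to have every sensor adapter emit the same small event type, so that only the adapter changes when hardware does. The sketch below is an illustration of that idea, not a standard: `PersonEvent`, `SensorSource`, `FakeLidar`, and the `/person/<id>` address scheme are all hypothetical names.

```python
from dataclasses import dataclass
from typing import Iterable, List, Protocol, Tuple

@dataclass(frozen=True)
class PersonEvent:
    """The only shape the visual runtime ever sees."""
    person_id: int
    x_m: float
    y_m: float

class SensorSource(Protocol):
    """Anything that can report the current set of people."""
    def poll(self) -> Iterable[PersonEvent]: ...

class FakeLidar:
    """Stand-in adapter. A real one would wrap the vendor's driver."""
    def __init__(self, centroids: List[Tuple[float, float]]):
        self._centroids = centroids

    def poll(self) -> Iterable[PersonEvent]:
        return [PersonEvent(i, x, y) for i, (x, y) in enumerate(self._centroids)]

def to_wire(events: Iterable[PersonEvent]):
    """Flatten events into (address, args) pairs for the transport layer."""
    return [(f"/person/{e.person_id}", (e.x_m, e.y_m)) for e in events]
```

Swapping the LiDAR for a depth camera then means writing one new class with a `poll` method. The wire format and everything downstream of it stay the same.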

Interaction design: the hard part

The hardware is easy compared to the interaction design. The first lesson is timing: a room that responds to the audience needs to respond at a rate the audience can feel - not too fast, not too slow. The sweet spot is usually between 100 and 400 milliseconds of reaction time. Faster feels jumpy; slower feels broken.
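A common way to dial in that reaction time is to run the raw sensor value through a one-pole low-pass filter whose time constant is derived from the response time you want. The sketch below defines "response time" as the time to cover 95% of a step change, which is an assumption of this example rather than an established convention. The class name and default are hypothetical.

```python
import math

class SmoothedResponse:
    """Exponential smoothing tuned by a target response time.

    After a sudden change in the target, the output covers 95% of the
    distance in response_time_s, regardless of the frame rate.
    """

    def __init__(self, response_time_s=0.25, initial=0.0):
        # exp(-t / tau) = 0.05 at t = response_time_s  =>  tau = t / ln(20)
        self.tau = response_time_s / math.log(20.0)
        self.value = initial

    def update(self, target, dt_s):
        """Advance the filter by dt_s seconds toward target."""
        alpha = 1.0 - math.exp(-dt_s / self.tau)
        self.value += alpha * (target - self.value)
        return self.value
```

Call `update(sensor_value, frame_dt)` once per frame and drive the visuals from the return value. Because the blend factor is computed from the elapsed time, a dropped frame does not change the feel of the response.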

Second lesson: responses should be subtle enough that a naive visitor doesn't immediately "figure out the trick." An installation that screams "YOU MOVED, I CHANGED" is less magical than one that shifts in ways the visitor feels rather than sees. The craft is in how the response is expressed, not in the sensor that detected the input.

The best interactive installations never tell you they're interactive. You just feel the room watching you and then, later, you realize the watching was the point.

What's coming next

Three trends worth watching. First, Wi-Fi- and Bluetooth-based presence sensing is getting accurate enough for some installations to use phone signals as anonymous crowd data. Second, millimeter-wave radar is starting to appear in high-end interactive work - it can detect breathing and heart rate without contact, and it can sense through thin non-metallic materials such as fabric or drywall. Third, small language models running on-device are enabling installations that can "understand" what visitors are doing rather than just detect that they're doing something. All three will change what the medium can do in the next few years.